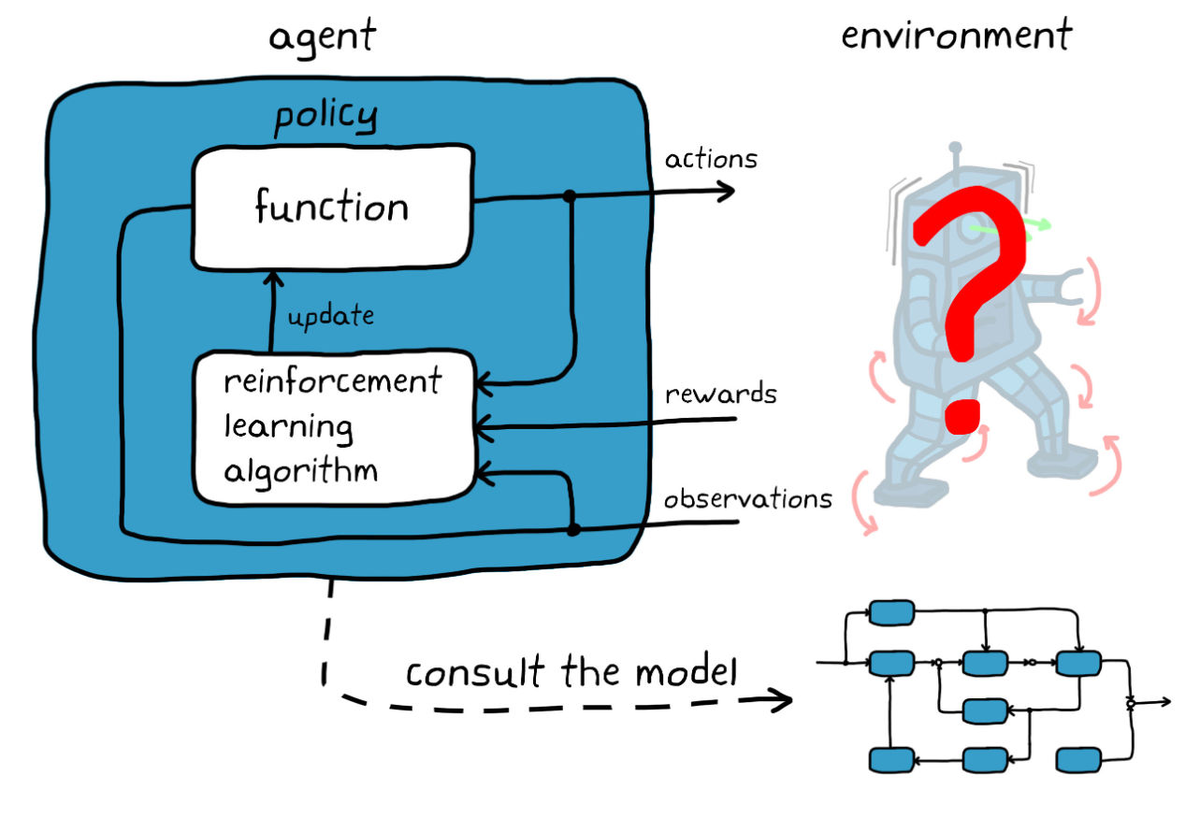

A Coding Implementation to Train Safety-Critical Reinforcement Learning Agents Offline Using Conservative Q-Learning with d3rlpy and Fixed Historical Data

The Avocado Pit (TL;DR)

- 🥑 Offline learning for RL agents? Yes, it's a thing with Conservative Q-Learning and d3rlpy.

- 🛤️ Train safely from logged data with no live exploration, keeping the chaos minimal.

- 🧠 Pair fixed historical data with a conservative learning algorithm, so the agent never has to experiment on the real system.

Why It Matters

If you've ever wished your AI agent could learn without tearing up your virtual living room, today is your lucky day. Training reinforcement learning (RL) agents offline means they learn only from previously recorded interactions, so nothing gets broken while our digital protégés find their way around the world. It's like teaching a toddler to walk in a padded room, minus the bumps.

What This Means for You

For developers and AI enthusiasts, this means less wear and tear (literally) on your training environments. Using offline data with Conservative Q-Learning, you can train RL policies without risky online exploration. In short, your agents get smarter without the side of panic-inducing errors.

The Source Code (Summary)

MarkTechPost's recent tutorial walks through building a safety-critical RL pipeline. The method? Train agents offline using Conservative Q-Learning (CQL) via d3rlpy and fixed historical data. CQL penalizes the value estimates of actions the dataset never shows, so the learned policy stays close to behavior it actually has evidence for. This approach is particularly handy for scenarios where live exploration is a no-go. The tutorial guides you through creating a custom environment, generating a behavior dataset, and then training both a Behavior Cloning baseline and a Conservative Q-Learning agent. Talk about efficient and safe learning!
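To make the Behavior Cloning versus Conservative Q-Learning comparison concrete, here is a minimal sketch in plain Python. It is not the tutorial's code and does not use d3rlpy's API: the two-state dataset, the function names, and the hyperparameters are all hypothetical, and the CQL part is a tabular simplification that adds the penalty `alpha * (logsumexp(Q[s]) - Q[s][a_data])` to an ordinary TD update.

```python
import math
from collections import Counter, defaultdict

N_STATES, N_ACTIONS = 2, 2

# Hypothetical logged transitions: (state, action, reward, next_state, done).
DATASET = (
    [(0, 0, 1.0, 0, True)] * 50     # state 0: only action 0 was ever logged
    + [(1, 0, 0.0, 0, False)] * 40  # state 1: mostly action 0, leading to state 0
    + [(1, 1, -1.0, 1, True)] * 10  # state 1: occasionally the costly action 1
)


def behavior_cloning(dataset):
    """Baseline: in each state, copy the action logged most often."""
    counts = defaultdict(Counter)
    for s, a, _r, _s2, _done in dataset:
        counts[s][a] += 1
    return {s: c.most_common(1)[0][0] for s, c in counts.items()}


def softmax(values):
    """Numerically stable softmax; this is the gradient of logsumexp."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def train_tabular_cql(dataset, alpha=1.0, gamma=0.9, lr=0.1, epochs=20):
    """TD learning on fixed data plus a conservative penalty (assumed form)."""
    q = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
    for _ in range(epochs):
        for s, a, r, s2, done in dataset:  # fixed order, no environment calls
            target = r if done else r + gamma * max(q[s2])
            probs = softmax(q[s])
            # Ordinary TD step on the logged (state, action) pair.
            q[s][a] += lr * (target - q[s][a])
            # Gradient step on alpha * (logsumexp(Q[s]) - Q[s][a]):
            # lowers every action's value, then raises the logged action's.
            for b in range(N_ACTIONS):
                grad = probs[b] - (1.0 if b == a else 0.0)
                q[s][b] -= lr * alpha * grad
    return q


def greedy_policy(q):
    """Pick the highest-valued action in each state."""
    return {s: max(range(N_ACTIONS), key=lambda a: q[s][a])
            for s in range(N_STATES)}


bc_policy = behavior_cloning(DATASET)
q_plain = train_tabular_cql(DATASET, alpha=0.0)  # no conservatism
q_cql = train_tabular_cql(DATASET, alpha=1.0)    # with the CQL-style penalty
print("BC policy:", bc_policy)
print("Q without penalty:", q_plain)
print("Q with penalty:", q_cql)
print("Greedy CQL policy:", greedy_policy(q_cql))
```

With `alpha=0.0` the value of the never-logged action in state 0 simply stays at its initial value, which is the kind of unchecked estimate that can mislead an offline agent. With a positive `alpha` that value is pushed below the logged action's, which is the conservative behavior the tutorial relies on.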

Fresh Take

In a world where AI can sometimes act like a bull in a china shop, Conservative Q-Learning is the tranquilizer that keeps things civil. By leveraging offline datasets, developers can avoid disasters during training and still improve on the behavior recorded in their logs. Who knew that sometimes the best way forward is to take a few steps back and learn from the past?

Read the full article on MarkTechPost.